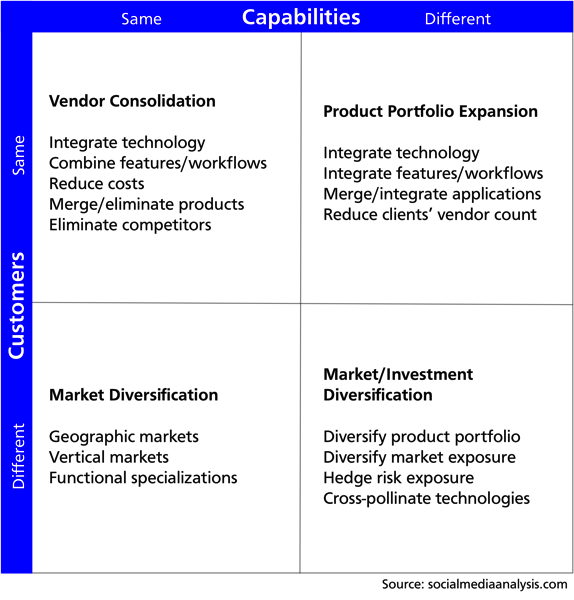

It's January, which means that I've been working on my annual posts on investments and M&A in the social media intelligence market. As always, I find myself mentally dividing the transactions into several buckets: the serial acquirers, the complementary products, the geographic expansions. While I was working on last year's post, I sketched out a matrix to summarize some of the logic of what I was seeing. This year, I'm sharing it with you.

The matrix is built on two variables: customer base and company capabilities. For each, are the merging companies the same or different? Together, these two questions go a long way toward explaining the logic of a deal.

Customers

Start with customers, which might be characterized by industry, location, functional role, or (likely) a combination of these. If the companies serve the same customers, does the combination bring new capabilities to those customers, or is it more about scale or reducing competition? If the companies serve different customers, is the combination about access to new markets or diversifying more generally?

Capabilities

Second, compare the companies' capabilities, especially their products, services, and underlying technologies. If the companies have similar strengths, do they bring different customers or markets to the combination? If their strengths differ, do they combine to better serve an overlapping customer base, or do the companies do different things for different customers?

Analyzing the Matrix

At one extreme, companies that have the same capabilities and the same target customers combine to build scale and consolidate their position in the market. At the other, dissimilar companies serving different customers may combine as an investment strategy or to create something entirely new. The Same/Different corners, on the other hand, represent the most common deal logic, in which a larger company acquires a capability or a customer segment.

Every year, I hear from people who expect a big increase in sector consolidation in the coming year. Which types do you expect to see?

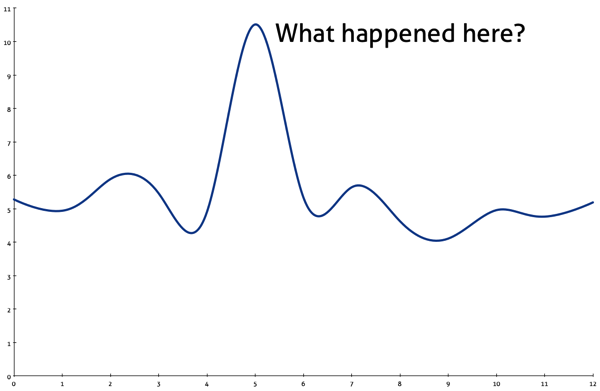

Everyone loves a chart that answers a key question, but I particularly like the ones that make you think: Why did that happen? What changed? What are we missing? What happens next?

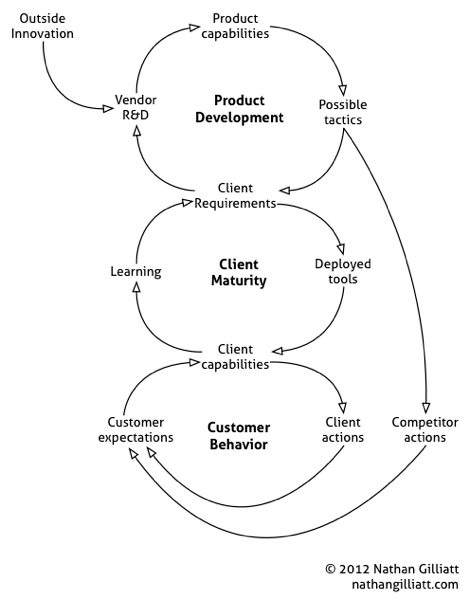

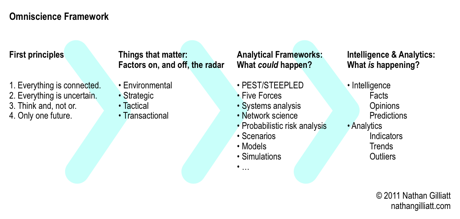

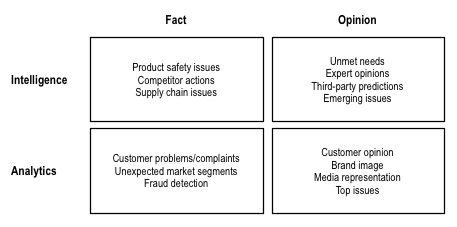

In a finite world, individuals specialize, but organizations don't have the same limitations. Given enough specialists, you can do it all. The challenge is in