-

A few things to consider if you've decided to hire your first social media specialist.

February 2008 Archives

Are you paying attention to The Associated Press v. Moreover Technologies, Inc. et al.? I heard about it while interviewing the founder of a different company for the Guide to Social Media Analysis, my reference to the companies that monitor and measure social media. He told me that his company provides summaries and links back to original sources to avoid the risk of copyright infringement. The interesting thing is, I had just heard from another company that it selected a data vendor specifically because of the full-text clips in its feed.

So, what's the deal with aggregating media content for a commercial service? Does blog aggregation with full content feeds violate copyright? Is it a question of fair use (US—fair dealing elsewhere), or is there more to consider? I asked Eric Goldman, Assistant Professor and Academic Director of the High Tech Law Institute at Santa Clara University School of Law, who started by telling me, "the law in the area is complicated, multi-faceted and unclear."

Great. So much for wrapping things up with a tidy stroll through fair use considerations.

In addition to copyright, Goldman suggested these areas of potential concern (the usual disclaimers apply: this is not legal advice; check with your own lawyers):

- Common law trespass to chattels

- Computer Fraud & Abuse Act

- State computer crime laws

- Contracts

- Trademark

Still with me?

So far, this is just the US perspective on an inherently international activity. My blog post was threatening to turn into a book, which I'm not even qualified to write (but I might want to read). So, let's go back to the current case that opened the topic, AP v. Moreover, or the Case of the Purloined Press. For extra credit, read the complaint (PDF).

This case isn't about social media monitoring; it's about redistributing traditional media content without a license. But it has similarities to other forms of media monitoring, in that a company is aggregating content for commercial purposes. How can a company avoid trouble while providing commercial content aggregation, and how does this translate to social media content with its millions of independent sources?

Potential solutions

One possible solution is to license the content. It's an established practice with traditional media that sets the terms of use, but it's not practical for decentralized, online media. Excerpting is another potential solution, which is being tested in the current case. The addition of metrics to raw content may help support a fair use/fair dealing argument. But with the unsettled state of the law, solutions are likely to be complicated and unclear, too.

When you get into the wilderness of social media sites, you encounter copyright, Creative Commons and terms of use that vary by site. This could be interesting. Oh, and complicated—and risky. We're not done with this topic, but for now, there's an ongoing case worth watching. I have my search feed running. Do you?

IMHO, IANAL, YMMV. I took an excellent course on communications law in grad school, and I enjoy a good conversation about policy, but I'm not a lawyer. You'd be an idiot to take anything I write or say as legal advice.

News from the companies of social media analysis.

Companies and services

- 25 February - Accelovation changed its name to NetBase and announced illumin8, a research tool for scientific and technical users. Illumin8 combines NetBase text-mining and search technologies with Elsevier's archives of scientific journals, patents and web-based content. press release (via Michael Osofsky)

- 25 February - Andy Beal launched Trackur, an online reputation monitoring tool. Separately, Radically Transparent: Monitoring and Managing Reputations Online, the book he wrote with UNR professor Judy Strauss, has just been released.

- 28 February - Filtrbox announced it has closed its seed financing round and opened its private beta.

- Changes at Brandintel: Bradley Silver now shares the CEO role with Roberto Drassinower, who takes operational leadership as Silver focuses on strategy. Sherry Harmon joins as senior VP for sales and marketing. Harmon was previously president of NextNine, a remote support automation provider.

- TNS MI/Cymfony released Harnessing Influence: How Savvy Brands are Unleashing the New Power of Blogs and other Social Media, a report based on interviews with 71 marketing executives in the US, UK, Canada and France. Highlights in AdWeek and New Communications Review.

- If you're a Super Bowl advertiser (or an agency involved in advertising at this year's game), Collective Intellect wants to give you a Super Bowl Advertiser Scorecard. A public report will be released in about a week.

Events

Tags: social media analysis brand monitoring

The March/April issue of Technology Review has an interesting piece on visualizing social networks (via Matt Hurst). All of the examples would make appropriately geeky wall charts or desktop backgrounds, but the one that caught my eye adds color to a social network chart to illustrate comment activity.

The layout is typical social network analysis—hubs and spokes. But the Comment Flow visualization, created by Dietmar Offenhuber and Judith Donath at the MIT Media Lab, is based on communication:

Offenhuber and Donath created these images by tracking where and how often users left comments for other users; connections are based on these patterns, rather than on whether people have named each other as "friends." As the time since the last communication grows, the visual connection begins to fade.

You'll want to go through the whole article. In addition to an application idea for the Comment Flow visualization, the article has examples of visualizations based on blog links, Twitter, social networks in the enterprise and viral marketing.

Nice of 'em to point out some of the researchers working on this stuff, isn't it?

-

Handy map showing which social networks are popular in different parts of the world.

-

Tactics for proving the value of analytics to skeptical management.

-

Brandintel study shows the connection between positive buzz and sales is, at best, indirect.

-

Sales, management and finance advice for SaaS businesses.

-

Lee's taking the lead on organizing our unconference. He shares an update on his blog.

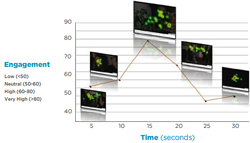

I follow discussions of web analytics only peripherally, but it's hard to miss the continuing discussions of how to define engagement, including equations with a lot of terms or a few. Meanwhile, I'm constantly checking out new (to me) companies that may be doing something I need to know about. This week, I discovered a company with a more, um, direct method for measuring audience engagement.

Forget the analytics definitions and think about what engagement really means. Focus. Attention. Tunnel vision. Racing pulse. Sweaty palms. You know when you're engaged in something. And the analytics discussions generally start from a definition close to the plain-English meaning before setting off to discover a method to determine engagement based on observable web behavior.

Innerscope Research goes back to the original definition by observing physiological responses to media. They connect test audiences to biometric sensors that measure respiration, motion, heart rate and skin conductance, and use eye tracking to follow attention.

Once the test subject is suited up, Innerscope can observe what the subject is watching and how the subject responds. Physiological responses are faster and more accurate than conscious answers, and the metering supports an analysis across the duration of the media exposure. The basic logic is simple: if the content makes you sweat or raises your pulse, you're engaged.

Attention + Intensity = Engagement

Innerscope has run experiments with both TV ads and viral videos. While the engagement vest isn't going to become standard attire for media consumers, it does suggest a possible test for competing definitions based on more convenient data sources.
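To make the "Attention + Intensity" idea concrete, here is a toy sketch in Python. It is not Innerscope's method, which isn't described here. It assumes, hypothetically, one sample per second, a 0/1 attention flag from eye tracking (gaze on the content or not), and an intensity score taken as the average of z-scored heart rate and skin conductance. The function names are made up for illustration.

```python
from statistics import mean, pstdev


def zscores(values):
    """Standardize a signal to mean 0 and (population) standard deviation 1."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A flat signal carries no intensity information.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def engagement_trace(on_screen, heart_rate, skin_conductance):
    """Hypothetical per-second engagement score: attention + intensity.

    on_screen: 1 if eye tracking shows gaze on the content that second, else 0.
    Intensity is the average of the z-scored physiological signals, so it is
    relative to this viewer's own baseline during the session.
    """
    hr_z = zscores(heart_rate)
    sc_z = zscores(skin_conductance)
    intensity = [(h + s) / 2 for h, s in zip(hr_z, sc_z)]
    return [a + i for a, i in zip(on_screen, intensity)]


if __name__ == "__main__":
    # Four made-up seconds of viewing; the last second shows a pulse spike.
    trace = engagement_trace([1, 1, 0, 1], [70, 72, 71, 85], [2.0, 2.1, 2.0, 3.5])
    peak = max(range(len(trace)), key=trace.__getitem__)
    print(trace, "peak second:", peak)
```

Standardizing per viewer keeps a naturally high resting pulse from reading as engagement; a competing web-behavior definition could be checked by whether it peaks at the same moments.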

-

Helpful outline for getting started without skipping a step.

-

A look at sentiment analysis from a BI perspective.

Well, that was a nice way to start the week! Jonny Bentwood posted his Top 100 analyst blogs list today, and the Net-Savvy Executive made the list at number 31. Really, I just hoped to make the list, and once I knew it was there (based on a vanity feed), I started looking from the bottom. I assumed it would be in the 90s, so it took a while to find my entry. So one event, two nice surprises.

News from the companies of social media analysis.

Companies and services

- 13 February - SkyGrid launched its real-time information platform for institutional investors. The service tracks online and traditional media sources, adding reputation, sentiment and other media metrics. Coverage is limited to publicly traded US companies for now. (via GigaOm)

- Todd Friesen joins Visible Technologies as VP of Search Strategies. Friesen will lead the company's reputation management and SEO activities and contribute to product development. press release

- TNS MI/Cymfony is presenting a free webinar to discuss the results of their recent study on social media in business, 28 February at 1:00 PM EST (GMT -5). Cymfony's Jim Nail leads the session, which focuses on incorporating social media tools in marketing strategies. announcement

- EuroBlog 2008 will be held in Brussels, 13–15 March. The conference theme is "Social media and the future of PR," and the program is dominated by agencies and academics. A session on the first day includes discussion of how to measure social media.

News from the companies of social media analysis.

Companies and services

- 8 February - Gianandrea Facchini launched Buzzdetector, an Italian social media monitoring company.

- 7 February - Sentiment Metrics launched a hotel review monitoring service that tracks major travel and hotel review sites as well as the overall social media environment.

- Biz360 and Visible Technologies were recognized among this year's OnMedia 100.

- Aberdeen Group released Social Media Monitoring and Analysis: Generating Consumer Insights from Online Conversation, a sponsored report on client objectives and practices in social media analysis.

- Admap published "Customer advocacy metrics: the NPS theory in practice," by Justin Kirby and Alain Samson. The report on the usage of customer advocacy metrics in UK companies is available for a limited time from DMC.

- Collective Intellect released mini reports on Super Bowl advertising and the Super Tuesday primary results.

- The list of accepted papers that will be presented at ICWSM, 31 March–2 April in Seattle, is now available.

Funny thing about "conversations" on blogs: sometimes you have to bounce around different sites to follow along. Yesterday, I wrote Combining social media and traditional research, a follow-up to Validating social media data (it got a little heavy, I know; go watch the video again if you need a break). Today I'll give you a few links to posts that I think are related.

- Guy Hagen posted at length on the strengths and weaknesses of social media research, as well as some specific ideas on combining social media and traditional research. If you wanted to outline the arguments around the new techniques, these will give you a good start.

- Jim Nail responded to Peter Kim's Predicting Super Tuesday winners with brand monitoring with Is prediction even the point of social media? Clearly, some companies are more committed to the use of social media analysis as a research methodology than others. The differences are what make the space interesting.

- The question of how well blogs (especially) and other social media represent the overall population came up last year, with several companies preferring to study online communities over blogs for just that reason.

I'm trying a new experiment, and I'm interested in your reaction. I've been publishing news from social media analysis companies for a while, and I occasionally hear about jobs they want to fill. So I've set up a job board to focus on social media jobs, especially jobs in social media analysis.

Listings are normally $50 for 60 days, but to get things started, you'll get an 80% discount when you post a job by March 31st with the discount code sma2008.

I'll highlight open listings in a blog post each week, either in the industry news post or in a separate post (I haven't decided that detail yet).

One limitation that I hope to address: the hosted job board system is set up for jobs in the US. The board was inspired by an opening in Asia, and I'd like the board to reflect my international coverage of the industry. So I'm looking for a solution.

One of my goals with this blog is to be read by everyone in social media analysis. Seems like a likely audience for a job board focused on the same space.

Here's one way to validate the results of your social media research: follow it up with a traditional research project. I was talking with Sangita Joshi from EmPower Research this morning, and I learned that some clients are using social media analysis in just this way.

Using both social media analysis and traditional research methods to explore the same topic may seem (a) redundant or (b) an admission of problems with social media analysis, but the combination has the potential to play to the strengths of each.

- Social media analysis can uncover new issues. The usual examples in support of listening to social media involve problems that companies didn't know about. Identifying topics in social media may raise issues for further exploration.

- Conclusions from social media analysis can be restated as hypotheses for traditional research. Critics who don't accept the validity of results from text analysis won't mind if the results are presented as hypotheses.

- Traditional research methods bring established techniques for determining the validity of the results. The Old School will be happy.

- Combining traditional research and social media analysis creates an opportunity to compare the results of both methods. What if you measured the degree to which the online universe mirrored the real world?

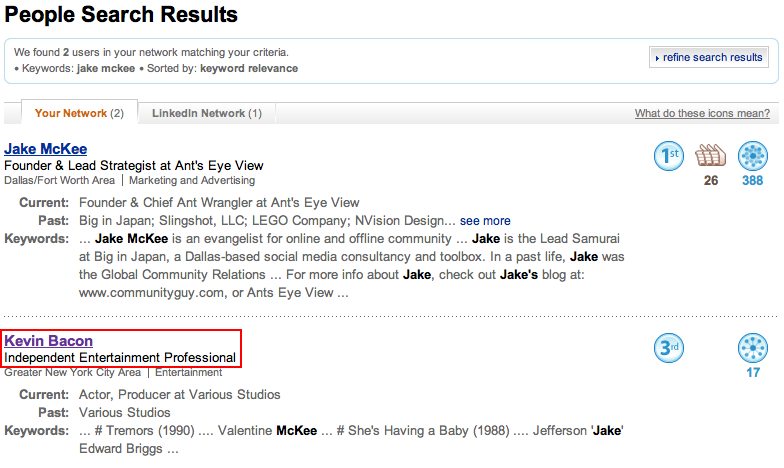

This is just too random to keep to myself. You know the game people play, Six Degrees of Kevin Bacon? This morning, I searched LinkedIn for Jake McKee (making sure we had connected in LI), and I got two results in my network: Jake (no surprise) and Kevin Bacon.

Really, I wasn't looking for a parlor trick, but this may just turn out to be a useful illustration of social networking.

-

Great summary of observations about working with communities: costs, benefits, strategies.

-

The next time someone questions the data behind your chart, just send 'em to this video.

It's possible that my earlier post today (Validating social media data) was a bit on the heavy reading side. All this talk of data, methodology, validation—it's exhausting. So here's a bit of inspiration from the Onion. The next time someone questions the data behind your chart, hit 'em with this.

Breaking News: Series Of Concentric Circles Emanating From Glowing Red Dot

(Hat tip to Andrew Vande Moere)

Generating quantitative observations from unstructured data in social media is new. So, surprise, it's a field that doesn't have mature standards yet. Really, we don't even have accepted definitions yet, because mainstream marketers—who are still hearing that they need to start listening—don't know enough about social media practices to drive standardization. It's not too early for challenges to the validity of the data, however.

Social media analysis is not (usually) survey research

Because it's not widely understood, and the discussion has tended to focus on the benefits of listening, social media analysis is sometimes criticized for not following the standards of other types of research. George Silverman's comparison of online and traditional focus groups is a good example.

Justin Kirby took a different swing at social media measurement, comparing data mining to survey research:

Just look at buzz monitoring practitioners who place great stock in sentiment analysis, but have none of the usual checks and balances (such as standard deviation) that underpin data validity within traditional research. If you can't calculate any margin of error, let alone show that you're listening to a representative sample of a target market, then how can you really prove that your analysis is sorting the wheat from the chaff and contributing valuable actionable data to your client's business?

(Justin has points worth pondering in the rest of his article, so go read it. I did note that marketers became advertisers early in the article, which suggests a partial answer to his complaint.)

Traditional research is based on sampling, where tests to determine the validity of the sample data are crucial (and, typically, poorly understood). Most social media analysis vendors are using automated methods to find all of the relevant posts and comments on a topic, which go into their analytical processes.
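For contrast, here is what the sampling-side check Justin mentions looks like. This Python sketch computes the usual normal-approximation margin of error for a proportion (say, the share of sampled posts scored positive). It assumes a simple random sample, which is exactly the assumption a "collect everything" approach doesn't make; the function name is hypothetical.

```python
import math


def proportion_margin_of_error(successes, n, z=1.96):
    """Half-width of a normal-approximation confidence interval for a proportion.

    Assumes a simple random sample of size n. z=1.96 corresponds to roughly
    95% confidence. The approximation is poor for very small n or for
    proportions near 0 or 1.
    """
    if n <= 0:
        raise ValueError("sample size must be positive")
    p = successes / n
    return z * math.sqrt(p * (1 - p) / n)


if __name__ == "__main__":
    # Hypothetical: 240 of 400 randomly sampled posts were scored positive.
    moe = proportion_margin_of_error(240, 400)
    print(f"60% positive, +/- {moe:.1%}")
```

With 240 of 400 posts positive, the margin comes out to about 0.048, or roughly plus or minus 4.8 points. If a vendor genuinely collects every relevant post, there is no sampling error to report, and the open questions shift to the ones below.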

Testing the results

I won't argue against the idea of tests to validate the data, but tests created for surveys and samples aren't necessarily relevant to new techniques. The question is, what's the right test of a "boil the ocean" methodology? Here are some of the challenges, which are different from "is the sample representative?"

- How much of the relevant content did the search collect (assuming the goal is 100%—if not, you're sampling)?

- How accurately did the system eliminate splogs and duplicate content?

- How accurately did the system identify relevant content?

- How accurate is the content scoring?
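The first and third questions map onto two standard information-retrieval measures: recall (how much of the relevant content was collected) and precision (how much of what was collected is actually relevant). Measuring either one assumes you have a hand-labeled reference set of relevant posts, which in practice is itself a sample. Here is a minimal Python sketch; the function names and post IDs are hypothetical, and the duplicate check is deliberately crude (exact match after lowercasing and collapsing whitespace), where real systems would need near-duplicate detection to catch splogs that lightly rewrite content.

```python
def precision_recall(retrieved, relevant):
    """Precision and recall of a collected set against a labeled reference set."""
    retrieved, relevant = set(retrieved), set(relevant)
    hits = len(retrieved & relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


def duplicate_rate(texts):
    """Share of items whose normalized text exactly matches an earlier item."""
    seen = set()
    dupes = 0
    for text in texts:
        key = " ".join(text.lower().split())  # lowercase, collapse whitespace
        if key in seen:
            dupes += 1
        else:
            seen.add(key)
    return dupes / len(texts) if texts else 0.0


if __name__ == "__main__":
    # Hypothetical post IDs: what the system collected vs. what a human judged relevant.
    p, r = precision_recall({"p1", "p2", "p3", "p4"}, {"p1", "p2", "p5"})
    print(f"precision {p:.2f}, recall {r:.2f}")
    print(duplicate_rate(["Great phone!", "great  phone!", "Battery died."]))
```

Here the system collected four posts, two of them relevant (precision 0.5), and found two of the three relevant posts (recall about 0.67).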

Ideally, results would be reproducible, competing vendors would get identical results, and clients would be able to compare data between vendors. Theoretically, everyone is starting with the same data and using similar techniques. All that's left is standardizing the definitions of metrics and closing the gap between theory and practice. Easy, right?
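One way to test whether competing vendors "get identical results" is to have both score the same set of posts and measure how often they agree beyond what chance would produce. Cohen's kappa is a standard statistic for that; it isn't something the vendors here are known to use, and the sentiment labels below are made up. A minimal Python sketch:

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Agreement between two raters on the same items, corrected for chance.

    1.0 means perfect agreement; 0.0 means no better than chance.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same label independently.
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical label for every item
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    vendor_a = ["pos", "pos", "neg", "neu", "pos", "neg"]
    vendor_b = ["pos", "neg", "neg", "neu", "pos", "pos"]
    print(round(cohens_kappa(vendor_a, vendor_b), 3))
```

In this example the two hypothetical vendors agree on four of six posts, but because chance alone would produce some agreement, kappa is 10/22, about 0.455, well short of "identical results."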

-

All those years of struggling against the expectation of identifying a pigeonhole...