I sometimes summarize the opportunity of social media analysis as using computers to "read the Internet." It's not an original idea, but it is one we still haven't mastered. I've seen many tools that find relevant content and apply some level of automated analysis, but we're not about to replace the analyst. One simple question I've started to think about is, "then what happens?"

The SocialSpook 9000 reads millions of blog posts, Facebook updates, and tweets every second. It finds every relevant mention in your space, extracting the facts, opinions, and needs that you're looking for. Its sentiment analysis engine provides 120% accuracy in 38 languages, and its graphics are so well designed that whole new awards contests have been created for it to win.

In 2007, I pointed out the need to link social media monitoring to customer service, because most of the problems that people were seeing as PR problems started with unhappy customers. Since 2010, I've been thinking about another application: blending social media data with other publicly available sources to create an automated view of what's happening in the world. It turns out to be a big challenge.

My own private news channel… or command center?

We can take this in several directions. At the low end, applications such as Flipboard generate personalized media based on activity in the user's social media accounts and selected topics or sources. In the middle, we might have a more dynamic version of the social media dashboard running in the conference room or reception area. It's the web-powered news channel that always shows something you might care about.

At the high end, we're looking at a valuable—but noisy and sometimes misleading—source of crowdsourced information about events in near-real time. The obvious applications are in government: national security, law enforcement, emergency management, and disaster response agencies are looking for fast and accurate information from social media sources. I see value in corporate applications, too, for functions like security, risk management, logistics, and business continuity that need information when things happen. Preferably without hiring an army of analysts to look at dashboards on the quiet days.

Now what happens?

The challenge in using social media for real-time awareness is that the volume level becomes overwhelming just when the information becomes most valuable. Forget looking for the needle in a haystack; this is the needle in the needlestack. Faster than you can read them, more messages arrive, and they're all relevant.

Existing tools generally emphasize either handling messages individually (think customer service or community engagement) or analyzing them in aggregate (think sentiment and leading topics). For this application, we want the system to help analysts deal with the volume without losing the detail, and that's where I started asking what happens next.

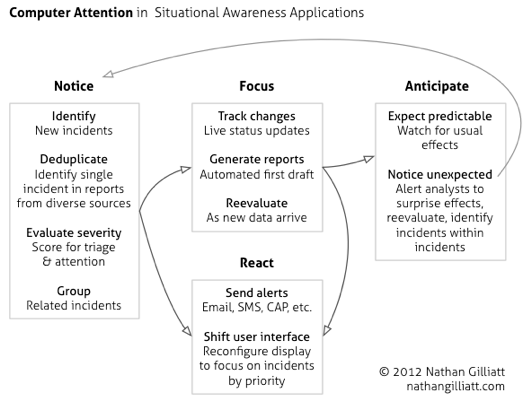

For all the systems that can notice something happening and put it on a screen, I wanted a system that can notice and pay attention. So what would that look like?

Here's an idea:

The inputs to this system can go beyond social media content; depending on your application, it might pick up data about natural disasters, weather, or market data. It might incorporate traditional news media, commercial intelligence services, or internal data. Its models will reflect the needs of its users, so a system that looks for, say, transportation-related incidents could be quite different from one looking for damage reports in weather emergencies.

This has a lot of moving parts, and it builds on what others have already built. The central idea is to go beyond the dashboard and think about how the system can relieve analysts of some of the burden of reading the alert queue. Step one is to consider what an analyst does with that information and how a computer could mimic that.
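To make "notice and pay attention" a little more concrete, here is a minimal Python sketch of one small piece: grouping incoming messages into incidents and flagging only the incidents that look worth an analyst's time. Everything in it is an assumption for illustration. The `Message`, `Incident`, and `AttentionTracker` names are hypothetical, the topic labels are assumed to come from some upstream classifier, and the thresholds are arbitrary. It is not a description of any existing product.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    source: str       # illustrative label, e.g. "twitter" or "news"
    topic: str        # assumed to be assigned upstream by a classifier
    timestamp: float  # seconds since some reference point
    text: str


@dataclass
class Incident:
    topic: str
    messages: list = field(default_factory=list)

    def sources(self):
        # Distinct sources that have reported on this incident.
        return {m.source for m in self.messages}


class AttentionTracker:
    """Hypothetical tracker: keeps one open incident per topic and
    flags incidents with enough messages from enough distinct sources."""

    def __init__(self, window=600.0, min_messages=3, min_sources=2):
        self.window = window              # max gap (seconds) before a topic starts a new incident
        self.min_messages = min_messages  # arbitrary threshold for this sketch
        self.min_sources = min_sources    # arbitrary threshold for this sketch
        self.open = {}                    # topic -> current Incident

    def observe(self, msg):
        incident = self.open.get(msg.topic)
        # Start a fresh incident if the topic is new or has gone quiet.
        if incident is None or msg.timestamp - incident.messages[-1].timestamp > self.window:
            incident = Incident(msg.topic)
            self.open[msg.topic] = incident
        incident.messages.append(msg)
        return incident

    def needs_attention(self):
        # Only incidents that pass both thresholds reach the analyst.
        return [
            inc for inc in self.open.values()
            if len(inc.messages) >= self.min_messages
            and len(inc.sources()) >= self.min_sources
        ]


tracker = AttentionTracker()
feed = [
    Message("twitter", "bridge-closure", 0.0, "Is the bridge closed?"),
    Message("twitter", "bridge-closure", 30.0, "Traffic stopped at the bridge"),
    Message("news", "bridge-closure", 90.0, "Authorities close bridge"),
    Message("twitter", "coffee", 100.0, "Great latte this morning"),
]
for m in feed:
    tracker.observe(m)

flagged = tracker.needs_attention()
print([(inc.topic, len(inc.messages)) for inc in flagged])
```

The design choice worth noticing is that the analyst's queue holds incidents rather than messages. The individual messages are still attached to each incident, so the detail is not lost, but the quiet one-off mention never reaches the queue.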

I'm sharing some of the frameworks that have been hiding on my whiteboard. Want the long version? Email me.