Measuring Social Media must be the new black. Everybody's doing it—the in-crowd is, at least, and the rest are starting to realize they're missing something. Just look at the agencies who've suggested their own take on the little black dress—that is, on how marketers should measure social media.

- Conversation Impact, Ogilvy PR

Ogilvy proposes a framework with three sets of metrics that correlate to the traditional marketing funnel: Reach and positioning, based on a combination of web analytics, media analysis, and search visibility; Preference, based on media analysis and traditional research; and Action, based on measurable client objectives (such as sales conversions).

- Social Influence Measurement, Razorfish (with TNS Cymfony and Keller Fay Group)

The SIM score, as introduced in the Fluent 2009 report, compares sentiment for a company to sentiment for its industry. The report also mentions share of voice and weighting for influence, although the formulas for the metric include neither.

- Digital Footprint Index, Zócalo Group (with DePaul University)

The DFI evaluates a brand's online presence in three dimensions: Height, which represents the total volume of brand mentions; Width, based on consumer engagement with online content; and Depth, based on message saturation and sentiment.

Three agencies, three models that fit fairly neatly into measurement silos. I've grouped them on the somewhat arbitrary basis that they're all backed by marketing agencies, but they're not answering the same question, are they?

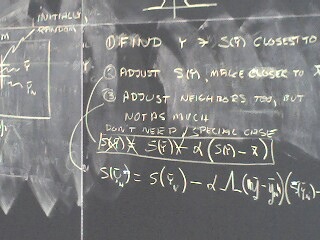

It was my understanding that there would be no math.

—Chevy Chase, as Gerald Ford

Breaking eggs, making omelets

Ogilvy's Conversation Impact tracks marketing effectiveness through its explicit alignment with the marketing funnel. I like that the framework intermingles different sources of data, including traditional research. The Action category, linking measurement to specific business outcomes, should help keep strategy and measurement on point.

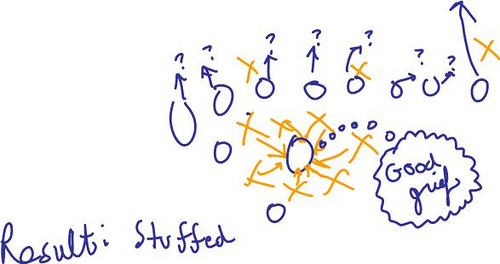

Razorfish's SIM score is all about perception. How does the client look compared to its competitors and industry? Despite the "influence" in its title, this score is entirely about sentiment, with none of the usual indicators of influence. As a single metric, the SIM should be compared with the Net Promoter Score or MotiveQuest's Online Promoter Score, but I'll be honest here. I'm having trouble figuring out the significance of this ratio of ratios. I tried a few scenarios to get a sense for how the numbers change and got some strange results: divide by zero errors, negative scores... The intermediate Net Sentiment metric is the more meaningful number here.
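To make the "ratio of ratios" problem concrete, here is a minimal Python sketch. It assumes Net Sentiment is (positive + neutral - negative) / total conversations and that the SIM score is brand Net Sentiment divided by industry Net Sentiment. That is one reading of the metric, not a formula confirmed here, and the function names and sample counts are made up for illustration.

```python
def net_sentiment(positive, neutral, negative):
    """Assumed definition: (positive + neutral - negative) / total mentions."""
    total = positive + neutral + negative
    if total == 0:
        return None  # no conversations at all, so there is no ratio to compute
    return (positive + neutral - negative) / total


def sim_score(brand, industry):
    """Assumed ratio of ratios: brand net sentiment over industry net sentiment.

    Each argument is a (positive, neutral, negative) tuple of mention counts.
    Returns None where the division is undefined.
    """
    brand_ns = net_sentiment(*brand)
    industry_ns = net_sentiment(*industry)
    if brand_ns is None or industry_ns is None or industry_ns == 0:
        return None  # the divide-by-zero case
    return brand_ns / industry_ns


# Hypothetical counts, chosen only to show how the score behaves.
healthy_industry = (500, 300, 200)    # net sentiment is positive
balanced_industry = (25, 25, 50)      # positives + neutrals exactly cancel negatives
disliked_industry = (100, 100, 800)   # net sentiment is negative

print(sim_score((60, 30, 10), healthy_industry))    # well-liked brand: score above 1
print(sim_score((10, 10, 80), healthy_industry))    # disliked brand: negative score
print(sim_score((60, 30, 10), balanced_industry))   # None: industry net sentiment is 0
print(sim_score((10, 10, 80), disliked_industry))   # two negatives: a positive score
```

The last case is the odd one: under these assumptions, a disliked brand in an equally disliked industry scores the same as a brand that matches a well-liked industry, which is part of why the intermediate Net Sentiment value is easier to interpret on its own.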

Zócalo's DFI addresses PR effectiveness, as telegraphed by the "earned media" headline in the announcement. The focus on presence, engagement, and sentiment picks up on important aspects of social media, but without more detail on the math behind the overall index value, this seems like another framework rather than a metric.

What are we measuring, exactly?

I'm not the first to say it: the golden metric that will answer every question does not exist. To be fair, the authors of these examples don't claim to have the ultimate answer, anyway. Social media initiatives can support diverse objectives, and so the metrics used to evaluate those initiatives or to answer questions will be equally diverse. But it is nice that we have people sharing their efforts to find appropriate metrics for some common objectives and questions. Thank you, and keep it up.

While working through the math, I was reminded of an old trick from undergrad science classes: if you're losing track of your formula, do the math with the units included. If the resulting units don't make sense (comments^2, for example), the value won't, either.
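The units trick can be sketched in a few lines of Python. This is a toy illustration, not a real units library: the `Quantity` class and the sample numbers are hypothetical, and it only handles multiplication and division.

```python
class Quantity:
    """A number tagged with units, stored as a {unit_name: exponent} dict."""

    def __init__(self, value, units=None):
        self.value = value
        # Drop units whose exponent has cancelled to zero.
        self.units = {u: e for u, e in (units or {}).items() if e != 0}

    def _combine(self, other, sign):
        # Add exponents when multiplying (sign=+1), subtract when dividing (sign=-1).
        units = dict(self.units)
        for unit, exponent in other.units.items():
            units[unit] = units.get(unit, 0) + sign * exponent
        return units

    def __mul__(self, other):
        return Quantity(self.value * other.value, self._combine(other, +1))

    def __truediv__(self, other):
        return Quantity(self.value / other.value, self._combine(other, -1))

    def unit_str(self):
        if not self.units:
            return "dimensionless"
        parts = [u if e == 1 else f"{u}^{e}" for u, e in sorted(self.units.items())]
        return " * ".join(parts)


comments = Quantity(120, {"comments": 1})
posts = Quantity(40, {"posts": 1})

print((comments / posts).unit_str())     # comments * posts^-1
print((comments / comments).unit_str())  # dimensionless
print((comments * comments).unit_str())  # comments^2
```

A result in comments per post is easy to explain, and a dimensionless ratio is at least plausible. A result in comments^2 is a sign that the formula went wrong somewhere upstream.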

Photo by alist.
