As ubiquitous surveillance is increasingly the norm in our society, what are the options for limiting its scope? What are the levers that we might pull? We have more choices than you might think, but their effectiveness depends on which surveillance we might hope to limit.

One night last summer, I woke up with an idea that wouldn't leave me alone. I tried the old trick of writing it down so I could forget it, but more details kept coming, and after a couple of hours I had a whiteboard covered in notes for a book on surveillance in the private sector (this was pre-Snowden, and I wasn't interested in trying to research government intelligence activities). Maybe I'll even write it eventually.

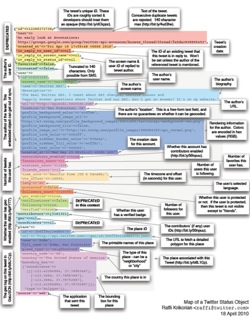

The release of No Place to Hide, Glenn Greenwald's book on the Snowden story, provides the latest occasion to think about the challenges and complexity of privacy and freedom in a data-saturated world. I think the ongoing revelations have made clear that surveillance is about much more than closed-circuit cameras, stakeouts and hidden bugs. Data mining is a form of passive surveillance, working with data that has been created for other purposes.

Going wide to frame the question

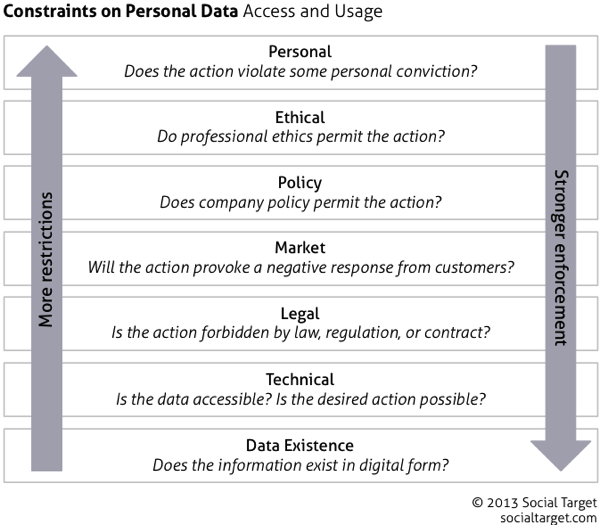

As I was thinking about the many ways that we are watched, I wondered what mechanisms might be available to limit them. I wanted to be thorough, so I started with a framework to capture all of the possibilities. Here's what I came up with:

The framework is meant to mimic a protocol stack, although the metaphor breaks down a bit in the higher layers. The lowest layers provide more robust protection, while the upper layers add nuance and acknowledge subtleties of different situations. Let's take a quick tour of the layers, starting at the bottom.

Hard constraints

The lowest layers represent hard constraints, which operate independently of judgment and decisions by surveillance operators:

- Data existence

If the data don't exist, they can't be used or abused. Cameras that are not installed and microphones that are not activated do not collect data. Unposted travel plans do not advertise absence; non-geotagged photos and posts are not used to track individual movements. At the individual level, countermeasures that prevent the generation of data exhaust will tend to defeat surveillance, as will the avoidance of known cameras and other active surveillance mechanisms.

- Technical

Data, once generated, can be protected, which is where much of the current discussion focuses. Operational security measures (strong passwords, access controls, malware prevention, and the like) provide the basics of protection. Encryption of stored data and communication links increases the difficulty, and the cost, of surveillance, but this is an arms race. The effectiveness of technical barriers to surveillance depends substantially on who you're trying to keep out and the resources available to them.

The upper layers represent soft constraints: those that depend on human judgment, decision-making and enforcement for their power. Each of these will tend to vary in effectiveness depending on the people and organizations conducting the surveillance.

- Legal

This is the second of two layers that contain most of the ongoing discussion and debate, and the default layer for those who can't join the technical discussion. The threat of enforcement may be a deterrent to some abuse. Different laws cover different actors and uses, as illustrated in the current indictment of Chinese agents for economic espionage.

- Market

In the private sector, there's no enforcement mechanism like market pressure: in this case, a negative reaction from disapproving customers. Companies have a strong motive to avoid activities that hurt sales and profits, and so they may be deterred from risking a perception of surveillance and data abuse. This is the layer least likely to be codified, but it has the most robust enforcement mechanism for business. In government, the equivalent constraint is political, as citizens/voters/donors/pressure groups respond to laws, policies and programs.

- Policy

At the organization level, policy can add limits beyond what is required by law and other obligations. Organization policy may in many cases be created in reaction to market pressure and prior hard lessons, extending the effectiveness of market pressure to limit abusive practices. In the public sector, the policy layer tends to handle the specifics of legal requirements and political pressures.

- Ethical

Professional and institutional ethics promise to constrain bad behavior, but the specific rules vary by industry and role, and enforcement is frequently uncertain. Still, efforts such as the Council for Big Data, Ethics, and Society are productive.

- Personal

Probably the weakest and certainly the least enforceable layer of all, personal values may prevent some abuse of surveillance techniques. Education and communication programs could reinforce people's sensitivity to personal privacy, but I include this layer primarily for completeness. Where surveillance operators are sensitive to personal privacy, abuses will tend not to be an issue.
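For readers who think in code, the stack described above can be pictured as an ordered list of layers, each tagged as a hard or soft constraint. This is only an illustrative sketch: the names `Kind`, `Layer`, `STACK` and `layers_of` are made up for this example and do not come from any library or from the original framework diagram.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    HARD = "hard"  # operates independently of operator judgment
    SOFT = "soft"  # depends on human judgment and enforcement


@dataclass(frozen=True)
class Layer:
    name: str
    kind: Kind


# Ordered bottom to top, following the tour of the layers above.
STACK = [
    Layer("Data existence", Kind.HARD),
    Layer("Technical", Kind.HARD),
    Layer("Legal", Kind.SOFT),
    Layer("Market", Kind.SOFT),
    Layer("Policy", Kind.SOFT),
    Layer("Ethical", Kind.SOFT),
    Layer("Personal", Kind.SOFT),
]


def layers_of(kind):
    """Return the names of all layers of the given kind, bottom to top."""
    return [layer.name for layer in STACK if layer.kind is kind]


if __name__ == "__main__":
    for position, layer in enumerate(STACK, start=1):
        print(f"{position}. {layer.name} ({layer.kind.value})")
```

Calling `layers_of(Kind.HARD)` picks out the two bottom layers, the ones that hold regardless of who is doing the watching.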

One step at a time

This framework isn't meant to answer the big questions; it's about structuring an exploration of the tradeoffs we make between the utility and the costs of surveillance. Even there, this is only one of several dimensions worth considering. Surveillance happens in the private sector and government, both domestically and internationally. There's a meaningful distinction between data access and usage, and different value in different objectives. Take these dimensions and project them across the whole spectrum of active and passive techniques that we might call surveillance, and you see the scope of the topic.

Easy answers don't exist, or they're wrong. It's a complex and important topic. Maybe I should write that book.

If I write both the surveillance book and the Omniscience book (on the value that can be developed from available data), should I call them yin and yang?

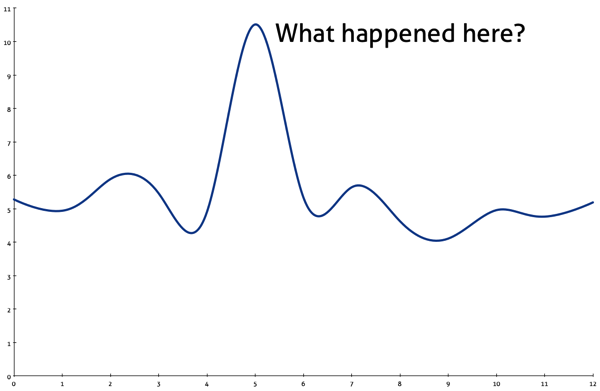

Everyone loves a chart that answers a key question, but I particularly like the ones that make you think: Why did that happen? What changed? What are we missing? What happens next?

Before you can pull insights from your data, you need data, but I'm hearing more concerns about data quality in social media analysis lately. Before, people asked about the traditional tradeoff in text queries: finding relevant content while excluding off-topic content. Lately, I'm hearing more about social data that's

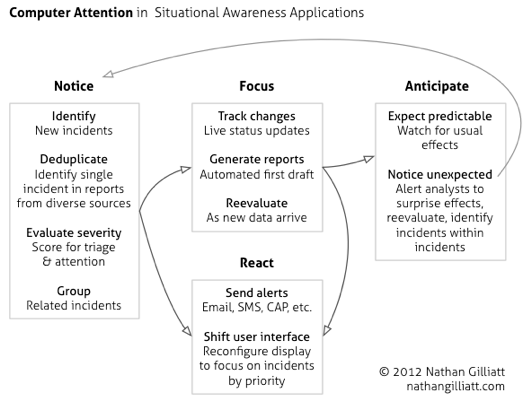

Quiz: A government agency wants to monitor social media in the course of performing its function. Is that an obvious use of public information, or further evidence of a dark conspiracy? Oh, good, I see lots of hands for both answers. Let's look at what's really going on here.
