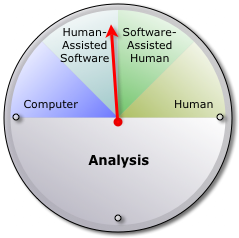

How do you like your social media analysis? Do you want the speed and scalability of an automated process, or do you prefer the subtlety and insight of a human analyst? Companies offering these services disagree on whether software or people are better at the task, and they're taking different approaches to answer the question.

First, let's define analysis. For this discussion, I'm talking about the process of rating individual items—posts, comments, messages, articles—on things like topic, sentiment and influence. Summarizing the data from many items and creating charts and reports come later.

The extremes

One end of the spectrum is fully automated analysis. Some companies have invested significant time and capital in systems that automate text analysis. They use terms like patent-pending and natural-language processing to describe software that "reads" and scores social media. Automated processes are usually—but not always—behind client dashboards.

The other end of the spectrum is human analysis, for those not convinced that computers can accurately rate written materials. These companies talk about human insight, subtlety and the ability to identify sarcasm. Some make a big deal of the quality of their employees, which makes sense, since their services are the product of their analysts' thought processes.

That's a fairly clear contrast, but it's not that simple. Human analysts benefit from the speed of computers, and automated processes benefit from occasional oversight, which brings us to two hybrid forms that a number of companies have adopted.

Software-assisted human analysis

The essence of human analysis is the decision making. It's not necessary to make the analyst do all the work when insight is the critical component. So some companies use software that organizes items and provides a user interface for the analyst. The system may even suggest preliminary scores for the analyst to confirm. Software-assisted human analysis uses the computer's speed to increase the efficiency of the human analyst.
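To make the workflow concrete, here is a minimal sketch in Python of how such a tool might be wired together. It is not any vendor's actual system: the keyword lists, the `suggest_sentiment` function and the `analyst` callback are all hypothetical stand-ins. The point is only the division of labor, with software proposing a score and the person making the final call.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical keyword lists; a real system would use something far richer.
POSITIVE = {"love", "great", "excellent"}
NEGATIVE = {"hate", "awful", "terrible"}


@dataclass
class Item:
    text: str
    suggested: str                 # preliminary score proposed by the software
    final: Optional[str] = None    # score confirmed or overridden by the analyst


def suggest_sentiment(text: str) -> str:
    """Propose a preliminary sentiment by counting keyword matches."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    pos = len(words & POSITIVE)
    neg = len(words & NEGATIVE)
    if pos > neg:
        return "positive"
    if neg > pos:
        return "negative"
    return "neutral"


def review(texts: List[str], analyst: Callable[[Item], str]) -> List[Item]:
    """Queue each item with a suggestion, then record the analyst's decision."""
    items = []
    for text in texts:
        item = Item(text=text, suggested=suggest_sentiment(text))
        item.final = analyst(item)  # the human decision is what gets kept
        items.append(item)
    return items
```

In this sketch, a sarcastic post like "Oh great, another outage" would get a preliminary score of positive from the keyword match, and the analyst callback is where a person would overrule it.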

Human-assisted software analysis

Software analysis is about speed, scale and predictability. The question is whether the resulting analysis is accurate enough to be useful. So some companies have human analysts audit the results. The process provides confirmation and feeds into machine-learning processes. Human-assisted software analysis uses human insight to check and improve the accuracy of the software.
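The audit loop can be sketched the same way. This is an illustration under assumptions, not a description of any particular product: the `audit` function, the `human_label` callback and the sampling approach are hypothetical. It draws a random sample of software-scored items, has a person label them, and reports how often the two agree, keeping the disagreements as candidate corrections for retraining.

```python
import random
from typing import Callable, List, Optional, Tuple


def audit(
    scored: List[Tuple[str, str]],
    human_label: Callable[[str], str],
    sample_size: int,
    seed: int = 0,
) -> Tuple[Optional[float], List[Tuple[str, str]]]:
    """Spot-check software scores against a human analyst.

    scored: (text, software_label) pairs produced by the automated system.
    Returns the agreement rate on the sample and the human corrections.
    """
    rng = random.Random(seed)  # fixed seed so an audit can be reproduced
    sample = rng.sample(scored, min(sample_size, len(scored)))

    corrections = []
    for text, software_label in sample:
        label = human_label(text)
        if label != software_label:
            # Disagreements become candidate training examples.
            corrections.append((text, label))

    if not sample:
        return None, corrections
    agreement = 1 - len(corrections) / len(sample)
    return agreement, corrections
```

The agreement rate speaks to the "accurate enough to be useful" question, while the corrections list is the part that would feed a machine-learning process.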

Trends

In practice, most companies hedge their bets. Those with major software investments sell the benefit of automated analysis in their dashboards while offering human analysis and interpretation as separate services. In essence, they offer computer speed and scale in one package and human subtlety and insight in another. The human-analysis companies tend toward the software-assisted model for its efficiency benefits. Almost everyone offers custom research based on the combination of human insight and analytical software. When it's time to crunch the data, everyone seems to agree on that particular combination.
